We give each task an ID, set it to call the appropriate function and tell it to run against the dag we created above. You can also write your own.įor simplicity, task1() and task2() just return a string, but they could run a SQL query and save the output, crunch some data, start a model, scrape a website, or perform pretty much any task you can think of. Here we’re using the PythonOperator() which runs Python code, but there’s also a BashOperator(), a MySQLOperator, an EmailOperator(), and various other operators for running different types of code. Operators usually run independently and don’t share information, so you’ll need separate ones for each step in your process. In Airflow, each task is controlled by an operator. We’ll import DAG and the PythonOperator and BashOperator from operators so we can run both Python and Bash code.įinally, we will create the DAG tasks. The first step to creating a DAG is to create a Python script and import Airflow and the Airflow operators and other Python packages and modules you need to run the script. Import the packages and modulesĪ DAG is basically a definition file that tells Airflow what task to perform.

To see how DAGs work, let’s start with a really basic example. Since DAGs are based on Python scripts they can be kept under version control and are easy to read, and they automate any computational process you can run in Python or Bash. By breaking down each DAG into precise steps, it’s easier to understand what they do, how long each step takes, and figure out why a step may not have run. Although they sound really complicated, DAGs can basically be thought of as workflows consisting of one or more tasks that have a start point and an end point. You’ve now got a named Docker container set up for Airflow that you can start whenever you need it.Īs I mentioned earlier, Airflow is based around the concept of Directed Acyclic Graphs or DAGs. Next, point your web browser to and you should see the Airflow webserver. This will fetch the latest version and install it on your machine so you can configure it.ĭocker run -name docker_airflow -d -p 8080:8080 -v /home/matt/Development/DAGs:/usr/local/airflow/dags puckel/docker-airflow webserver To pull the Puckel Docker container for Airflow open up your terminal and enter the below command. Various Airflow Docker containers exist, including an official one, but the most widely used is from a user called Puckel, which has had over 10 million downloads.

It also means there’s far less configuration required to get Airflow up and running.

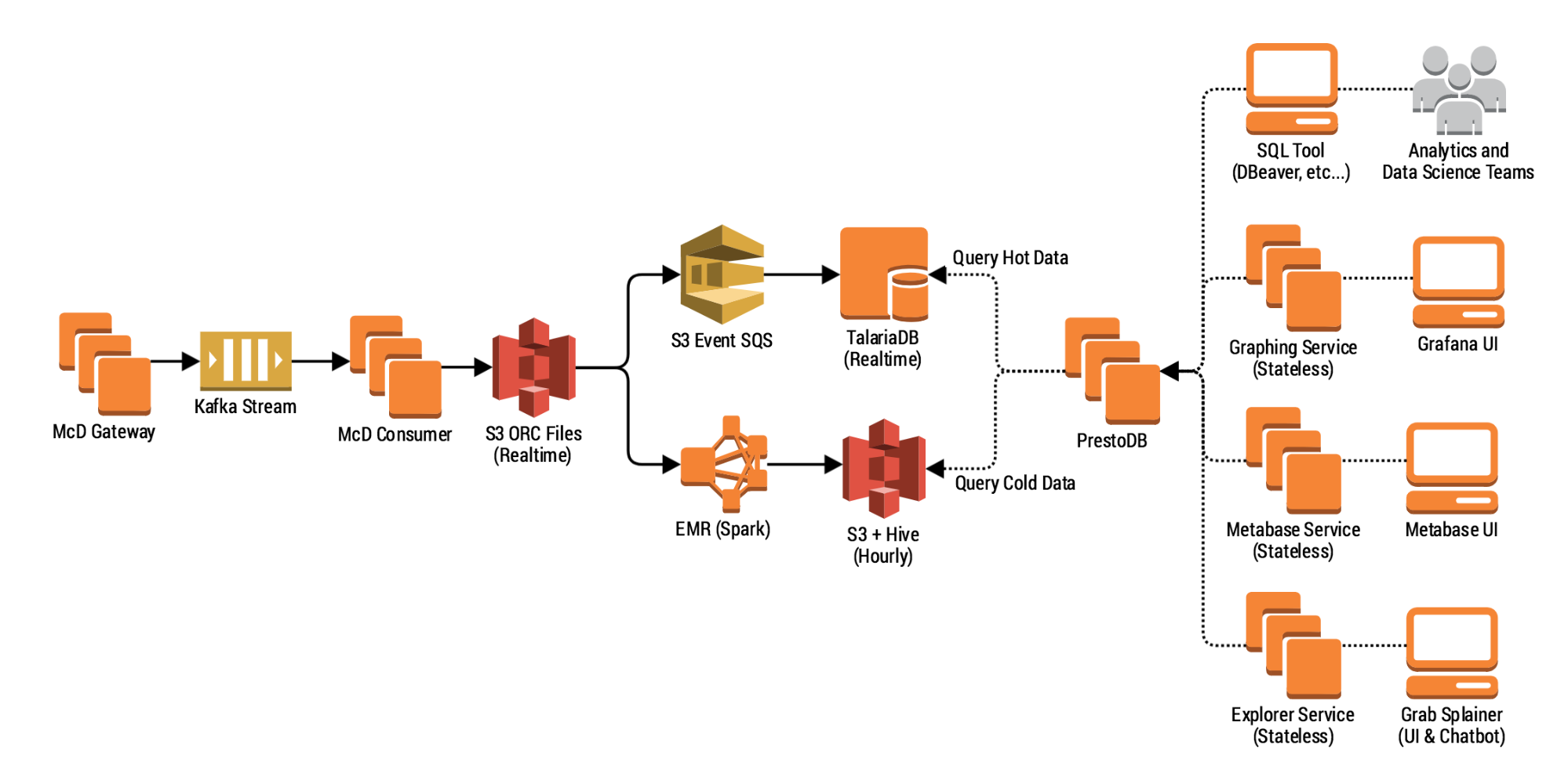

However, most companies who run Airflow are likely to do so via Docker, so we’ll follow this approach. Install the Airflow Docker containerĪs with Superset, Airflow can be installed as a Python package from PyPi. Here we’re going to install Apache Airflow, build a simple DAG, and make the data available to Apache Superset so it can be used to create a custom ecommerce business intelligence dashboard. They can also support dependencies, so if one workflow depends on the presence of data from another, it won’t run until it’s present. Not only are DAGs logical and relatively straightforward to write, they also let you use Airflow’s built in monitoring tools and can alert you when they’ve run or when they’ve failed, which the old-school Cron task approach doesn’t do. The workflows are known as Directed Acyclic Graphs, or DAGs for short, and they make life a lot easier for data engineers. In Airflow, workflows are written in Python code, which means they’re easier to read, easier to test and maintain, and can be kept in version control, rather than in a database. A workflow or data pipeline is basically a series of tasks which run in a specific order to fetch data from various systems or perform various operations so it can be used by other systems. It’s a Python-based platform designed to make it easier to create, schedule, and monitor data “workflows”, such as those in ETL (Extract Transform Load) jobs, and is similar to Oozie and Azkaban. Like Apache Superset, Apache Airflow was developed by the engineering team at Airbnb and was open sourced in 2014.
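To make the task setup concrete, here is a minimal sketch of a DAG file with two PythonOperator tasks. It is not the code from the original post: the dag_id `basic_example`, the start date, the returned strings, and the choice to run task1 before task2 are all assumptions made for illustration. The nested import tries the Airflow 2.x module path first and falls back to the older 1.10-style path, and the outer guard only exists so the two plain functions can be run on a machine without Airflow installed.

```python
from datetime import datetime


def task1():
    # Placeholder work: a real task might run a SQL query or scrape a site.
    return "Task 1 complete"


def task2():
    # Placeholder work for the second step.
    return "Task 2 complete"


try:
    from airflow import DAG
    try:
        # Module path used by Airflow 2.x
        from airflow.operators.python import PythonOperator
    except ImportError:
        # Module path used by older 1.10-style releases
        from airflow.operators.python_operator import PythonOperator
except ImportError:
    # Airflow is not installed; the functions above can still be called.
    DAG = None

if DAG is not None:
    # Hypothetical DAG name and start date, chosen for this sketch.
    dag = DAG(dag_id="basic_example", start_date=datetime(2021, 1, 1))

    # Each task gets an ID, a function to call, and the DAG it belongs to.
    t1 = PythonOperator(task_id="task1", python_callable=task1, dag=dag)
    t2 = PythonOperator(task_id="task2", python_callable=task2, dag=dag)

    # Assumed dependency: task2 only runs after task1 has succeeded.
    t1 >> t2
```

Saved into the mounted DAGs directory, a file like this should be picked up by the Airflow webserver, with the `>>` line giving the two tasks a start point and an end point.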
